Plugging the Reliability Sieve

Why the Most Capable Analytical Tools in Maintenance Are Structurally Blind to Their Highest-Leverage Intervention

There’s an old saying in data work: garbage in, garbage out. With AI’s ability to take data and run with it, people are now saying garbage in, disaster out.

But your poor data is not the primary threat.

The primary threat is garbage assumptions, baked into the model architecture by people who have no idea what the data means in reality and have never had to explain to a plant manager why the 1,600% return on investment projected by the AI tool never panned out.

At the SAP Asset Management conference this week in Houston, a line item in one AI platform’s recommended maintenance interventions for a client site caught my eye: megger motors. Cost: ~$2,000. Projected annual savings: $34,000.

The ROI is eye-catching. The assumptions behind it are what should concern you. This problem did not start with AI: you get the same bad assumptions in manual consulting and internal business case planning. The difference is that AI will produce them at a volume, and with a seemingly data-validated credibility, that will have executives salivating to “take the save” when the save is somewhere else entirely.

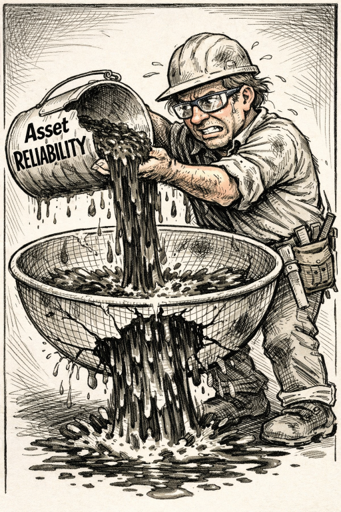
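The quoted cost and savings figures are enough to reproduce a headline number of this kind. A minimal sketch of the arithmetic, assuming a simple first-year ROI of (savings - cost) / cost; the `realized_fraction` values are hypothetical and only show how quickly the figure moves if part of the projected save never materializes:

```python
def simple_roi_percent(cost: float, annual_savings: float) -> float:
    """First-year ROI as a percentage: (savings - cost) / cost * 100."""
    return (annual_savings - cost) / cost * 100.0


# Figures quoted in the text for the "megger motors" line item.
COST = 2_000.0
PROJECTED_SAVINGS = 34_000.0

headline = simple_roi_percent(COST, PROJECTED_SAVINGS)
print(f"Headline ROI: {headline:.0f}%")

# Hypothetical sensitivity check: what if only part of the projected
# savings is ever realized? These fractions are illustrative assumptions.
for realized_fraction in (1.0, 0.5, 0.25, 0.1):
    roi = simple_roi_percent(COST, PROJECTED_SAVINGS * realized_fraction)
    print(f"{realized_fraction:>4.0%} of savings realized -> ROI {roi:.0f}%")
```

With the quoted inputs the headline works out to 1,600%. Everything that matters is hidden inside the savings figure, which is where the assumptions live.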

Let’s work through this example, because it illustrates something that goes far deeper than a bad projection. Most maintenance organizations are trying to fill a reliability sieve. They are getting a lot of “help” in how to increase the input volume, but almost no help in plugging the sieve. Sustainable ROI requires first plugging the sieve.

What Megger Testing Actually Does

A megohmmeter test applies a DC voltage between the motor windings and ground and measures the insulation resistance. When that resistance trends downward, it indicates that the insulation is degrading from moisture ingress, contamination, heat cycling, or age.
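Trending is the operative word: a single reading says little, while a run of readings shows direction. As a rough sketch, assuming periodic readings in megohms for one motor, a least-squares fit on the logarithm of resistance gives a simple trend indicator. The readings are hypothetical, and the sketch ignores temperature correction and the other adjustments a real test program would apply:

```python
import math


def log_resistance_slope(readings):
    """Least-squares slope of ln(megohms) versus time in years.

    `readings` is a list of (years_since_first_test, megohms) pairs.
    A negative slope means insulation resistance is trending downward.
    """
    times = [t for t, _ in readings]
    logs = [math.log(r) for _, r in readings]
    n = len(readings)
    mean_t = sum(times) / n
    mean_l = sum(logs) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    sxy = sum((t - mean_t) * (l - mean_l) for t, l in zip(times, logs))
    return sxy / sxx


# Hypothetical annual readings for one motor (not real test data).
readings = [(0.0, 1000.0), (1.0, 500.0), (2.0, 250.0), (3.0, 125.0)]

slope = log_resistance_slope(readings)
if slope < 0:
    halving_years = math.log(2) / -slope
    print(f"Downward trend: resistance halves about every {halving_years:.1f} years")
else:
    print("No downward trend in these readings")
```

The log scale is a design choice for the illustration: insulation resistance spans orders of magnitude, so a proportional decline is easier to see than an absolute one.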

Like all condition monitoring, megger testing does not prevent degradation. There was already a failure mechanism at work on the insulation before the test was run. The only cost the megger prevents is the cost of escalation: letting a degraded motor run until the winding fails to core rather than catching it while something can still be done, from relatively inexpensive drying and treatment to rewinds short of a full replacement.

There is physics at play here: insulation degradation generally lives on one of the slowest P-F curves (potential failure to functional failure) in the motor failure catalog. That long P-F interval is actually what makes periodic meggering useful, because you usually have time to act. But it also means that the faults being detected are, by definition, slow-developing and often addressable through upstream conditions.

This brings out two important points that are missed in the garbage foundational assumptions.

One, the teams stringing this data model together do not understand the linkages between the active failure mechanism, the means to detect it, and what is actually required to correct that failure mechanism to achieve a save — and what that save will be. The save is not avoiding repair. The save is doing a repair instead of a full replacement — which is only realistic on larger motors in your fleet.

Two, the teams stringing this data model together do not understand upstream prevention, and critically do not know how to find the data on where prevention caused nothing to happen. The real save is in the null set.

What creates insulation degradation in the first place? Moisture ingress from improper storage. Motors sitting without space heaters energized. Contamination from inadequate housing protection. Thermal degradation from voltage imbalance, overloading, or blocked cooling passages. Every one of these has a prevention path that stops or slows the failure mechanism. The megger only finds the failure when it is happening. The real save is in proper storage practice, correct installation, and operating condition monitoring. Address those, and you’ve eliminated a substantial portion of the failure population before the P-F curve even starts.

The Sieve

Think of it this way. Most reliability programs are pouring resources into filling a vessel: detecting degradation earlier, scheduling interventions more precisely, predicting remaining useful life with tighter confidence intervals. But the vessel leaks – profusely. Defects are introduced at every maintenance intervention: imprecise alignment, contaminated lubricant, improper torque, careless material handling, rushed installation. Defects continue to be introduced and exacerbated during equipment operation. No amount of filling compensates for the holes.

Industry studies consistently place winding and stator faults at roughly 25-40% of all motor failures — 26% in an IEEE IAS survey of over 1,100 induction motors, 36% in EPRI’s estimate, and 38% in another widely cited survey. For large high-voltage motors, the share is substantially higher: a 2022 PCIC paper puts bearings and stator windings together at 75-80% of HV motor failures, with one petrochemical-industry study finding stator windings at roughly 60% of failures in motors above 2,000 kW. These are not exotic edge cases. They are the dominant failure mode in the equipment class where the rewind economics apply. Within that population, insulation-to-ground faults represent the majority of winding failures — precisely the mechanism megger testing is designed to detect.

The share matters because it tells you how large the prevention opportunity is relative to the detection opportunity. If roughly a third of your motor failures are winding-related and a meaningful fraction of those are preventable through upstream practice — space heater compliance, thermal load management, cooling system reliability, moisture exclusion, alignment quality at installation — then the value of reducing failure incidence significantly outweighs the incremental save from catching failures earlier in their P-F curve.

Weibull analyses across process-industry equipment populations consistently show that a significant proportion — on the order of one in five — exhibit infant mortality patterns: failures clustering in the early period after a maintenance intervention. Where that infant mortality has been investigated, it is predominantly attributed to maintenance-induced causes rather than intrinsic equipment defects. The maintenance process is generating the failure modes it was designed to prevent. Each intervention is a new opportunity to introduce a defect. The bearing that starts failing three months after installation isn’t aging — it was installed into a degraded state and the P-F clock started at the moment the wrench came off.
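For readers less familiar with Weibull analysis, infant mortality corresponds to a shape parameter below 1, where the hazard rate is highest right after the intervention and falls with age. A small sketch of the standard Weibull hazard function makes the three regimes concrete. The scale value and the ages compared are arbitrary choices for illustration, not fitted to any equipment population:

```python
def weibull_hazard(t: float, shape: float, scale: float) -> float:
    """Weibull hazard rate h(t) = (shape / scale) * (t / scale) ** (shape - 1)."""
    return (shape / scale) * (t / scale) ** (shape - 1)


def failure_pattern(shape: float) -> str:
    """Classify the failure pattern implied by the Weibull shape parameter."""
    if shape < 1.0:
        return "infant mortality (hazard falls with age)"
    if shape == 1.0:
        return "random (constant hazard)"
    return "wear-out (hazard rises with age)"


# Hypothetical scale parameter in months, chosen only for illustration.
SCALE_MONTHS = 60.0

for shape in (0.5, 1.0, 3.0):
    early = weibull_hazard(1.0, shape, SCALE_MONTHS)
    late = weibull_hazard(48.0, shape, SCALE_MONTHS)
    print(
        f"shape={shape}: h(1 mo)={early:.4f}, h(48 mo)={late:.4f}"
        f" -> {failure_pattern(shape)}"
    )
```

A fitted shape below 1 after maintenance work is the statistical fingerprint of defects introduced during the work itself.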

Rough modeling suggests that cutting failure incidence in half through genuine upstream prevention delivers three to four times the annual return of a megger program operating on the same population at full failure rate. That ratio holds because prevention pushes out the failure mechanism enough to avoid half of the repair costs per year, while megger testing only improves the outcome within the failure population that already exists.
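The inputs behind that rough model are not reproduced here. As an illustration only, the sketch below uses hypothetical numbers (ten failures a year, a rewind costing 70% of a replacement, a megger program that catches half of the failures in time) to show the shape of the comparison: detection earns the difference between repair and replacement on the failures it catches, while prevention earns the whole cost of the failures that never occur.

```python
def detection_saving(failures_per_year, catch_fraction, replace_cost, repair_cost):
    """Annual saving from catching failures early enough to repair, not replace."""
    return failures_per_year * catch_fraction * (replace_cost - repair_cost)


def prevention_saving(failures_per_year, incidence_reduction, cost_per_failure):
    """Annual saving from failures that never happen at all."""
    return failures_per_year * incidence_reduction * cost_per_failure


# All inputs below are hypothetical assumptions, not figures from any site.
FAILURES_PER_YEAR = 10
REPLACE_COST = 20_000.0      # full replacement
REPAIR_COST = 14_000.0       # rewind, assumed at 70% of replacement
CATCH_FRACTION = 0.5         # share of failures the megger catches in time
INCIDENCE_REDUCTION = 0.5    # failure incidence cut in half by prevention

megger = detection_saving(
    FAILURES_PER_YEAR, CATCH_FRACTION, REPLACE_COST, REPAIR_COST
)
prevention = prevention_saving(
    FAILURES_PER_YEAR, INCIDENCE_REDUCTION, REPLACE_COST
)
ratio = prevention / megger

print(f"Megger program saving:  ${megger:,.0f} per year")
print(f"Prevention saving:      ${prevention:,.0f} per year")
print(f"Prevention / detection: {ratio:.1f}x")
```

Under these particular assumptions the ratio lands a little above three. It moves with the repair-to-replacement cost gap and the catch rate, so the point is the structure of the comparison rather than the exact multiple.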

The Structural Problem: RCM and AI Are Both Blind to the Same Thing

The detail above is what is required to truly move the needle in a heavy industrial setting. The people who built the model didn’t know these things. They’re not plant engineers. They’re data scientists. They made assumptions about the causal and mitigation chains and their economics. The math is internally consistent. The assumptions are not connected to reality.

That’s not a data problem. That’s a knowledge problem. And AI doesn’t fix knowledge problems. It amplifies them.

But this goes deeper than one bad AI platform. The structural blind spot is shared by the most widely adopted analytical framework in maintenance: RCM itself. The RCM decision logic routes every failure mode through a fixed sequence: is there an on-condition task? A scheduled restoration? A scheduled discard? A failure-finding task? A redesign? Those are the five outputs the framework can produce. "Stop introducing this failure mode through poor execution" is not one of them. The framework assumes execution quality as an input — something that exists before the analysis begins — and never interrogates it as a variable. You can run a textbook RCM analysis on a motor population where 40% of failures are caused by contaminated lubricant at installation, and the decision tree will recommend vibration monitoring to catch the resulting bearing degradation. It will not recommend fixing the lubrication practice. It cannot. The category does not exist in the logic.

Experienced RCM practitioners will push back here, and they're partly right. The best implementations do address precision maintenance, operations, and defect elimination. But notice where that happens: it's carried in by knowledgeable practitioners who already understand execution quality, not generated by the RCM decision logic itself. And it is generally provided as a training and/or coaching afterthought, not a core systemic output. The FMEA identifies a failure mode, the decision tree routes it to a task category, and nowhere in that sequence does the framework ask "is this failure mode being introduced by poor execution, and would the highest-leverage intervention be to stop introducing it?" Precision maintenance arrives as practitioner wisdom brought alongside the process, not as an output the process produces. That distinction matters, because when the framework gets implemented by people who don't already carry that knowledge — or when it gets automated by an AI platform — the execution quality layer simply doesn't appear. It was never in the logic. It was in the practitioner.

That’s not an accident. It’s a structural feature of how RCM works: none of its five output categories can hold an execution standard. “Ensure the motor is stored with space heaters energized and shaft protected from condensation cycles” is not a category. “Verify voltage balance is within 2% before declaring a motor healthy” is not a category. “Confirm lubricant cleanliness meets ISO 4406 before filling the bearing housing” is not a category. Execution quality is treated as an assumption, something the framework inherits, not something it produces.
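The routing described above can be sketched as a fixed sequence of questions with five possible return values. This is a deliberately simplified illustration, not a faithful RCM decision diagram: real logic also weighs consequences, technical feasibility, and whether a task is worth doing. The class and field names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class RcmOutput(Enum):
    """The five output categories listed in the text."""
    ON_CONDITION = "on-condition task"
    SCHEDULED_RESTORATION = "scheduled restoration"
    SCHEDULED_DISCARD = "scheduled discard"
    FAILURE_FINDING = "failure-finding task"
    REDESIGN = "redesign"


@dataclass
class FailureMode:
    name: str
    detectable_before_failure: bool
    restorable_on_schedule: bool
    discardable_on_schedule: bool
    hidden_function: bool
    introduced_by_execution: bool  # recorded here, but never read by route()


def route(mode: FailureMode) -> RcmOutput:
    """Walk the fixed question sequence and return the first category that fits."""
    if mode.detectable_before_failure:
        return RcmOutput.ON_CONDITION
    if mode.restorable_on_schedule:
        return RcmOutput.SCHEDULED_RESTORATION
    if mode.discardable_on_schedule:
        return RcmOutput.SCHEDULED_DISCARD
    if mode.hidden_function:
        return RcmOutput.FAILURE_FINDING
    return RcmOutput.REDESIGN


bearing = FailureMode(
    name="bearing degradation from contaminated lubricant at installation",
    detectable_before_failure=True,
    restorable_on_schedule=False,
    discardable_on_schedule=False,
    hidden_function=False,
    introduced_by_execution=True,
)
print(route(bearing).value)
```

The failure mode is flagged as introduced by execution, yet the function returns a monitoring task, because no branch ever looks at that flag and no enum member exists for it to return.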

AI predictive maintenance inherits the same blind spot, and then amplifies it. Machine learning models train on failure data: work orders, sensor readings, vibration spectra, downtime records. They find correlations between pre-failure patterns and failure events. The more sophisticated platforms — those incorporating physics-of-failure models, multi-sensor fusion, or fleet-level degradation tracking — do genuinely useful work in identifying developing faults. This is not an argument against condition monitoring or against AI.

It is an argument about what these tools cannot see. By construction, they cannot find evidence of defects that were never introduced in the first place. Precision alignment that keeps a bearing running for seven years generates no data. Contamination-free lubricant that prevents early-life bearing failure generates no data. The clean installation generates no work order. The avoided failure has no sensor signature. Prevention produces non-events, non-events produce no data, and data-driven systems systematically undervalue prevention as a result.

So the model doesn’t recommend fixing installation quality, because installation quality data doesn’t exist in the systems the model trains on. The gap isn’t in the algorithm. It’s upstream of the algorithm.

Accelerating Bad Advice

As I have written elsewhere on the site, the consulting industry has been selling bad reliability advice for thirty years, most of which does not get to the point of action.

AI hasn’t changed that. It’s made it faster and more confident. What used to take a consultant six weeks to produce in a PowerPoint now takes an AI platform six minutes to produce on a dashboard. The velocity of the advice has increased. The quality of the underlying assumptions hasn’t.

When a consultant hands you a flawed savings projection, there’s friction. Someone can challenge it in a meeting. The consultant has to defend the methodology. Assumptions get surfaced.

When an AI platform generates the same projection, one validated by machine learning, backed by benchmarks, and displayed with statistical confidence intervals, the friction disappears. The number looks authoritative because the machine said it (not to mention that someone in your organization spent a lot of real and political capital on said machine and its outputs). Nobody asks how many full ground faults the model assumed per year. Nobody asks where the rewind/replace threshold was set. Nobody asks whether the upstream prevention pathway was modeled at all.

Garbage in, disaster out. Not because the data is dirty. Because the model was built by people who lack the domain expertise – and contrarian thinking – that result in real change. RCM has not moved heavy industry maintenance out of the 1980s despite decades of effort – perpetual sieve filling. Layering AI on top of RCM will not plug the sieve.

The Sequence That Actually Works

AI can help accelerate you on the right path, but it takes people who know what that right path is. That path is toward executing actual measures that prevent failure mechanisms, not just find them.

With good upstream prevention, what megger testing catches is the residual: failures that develop despite good practice. That’s real value. But it is bounded value, most prominent in production-critical assets — which you should already have on a route. The projected savings it generates might even be defensible, because it’s not fighting against a tide of introduced defects.

I would also argue that if the chain from foundational assumptions to execution quality is not in place, you’re not going to get much out of your megger testing either. By the time you catch something and deal with slow planning processes and break-ins, your value has likely dissipated as the tyranny of the calendar takes you from a dry and treat to a standard rewind. From a standard rewind to a burnout rewind. From a burnout rewind to a replacement.

The highest-leverage intervention in most reliability programs is execution quality at the point of work and the point of operation. These are the standards that extend the I-to-P interval — the window between installation and the onset of the first detectable fault — and no standard analytical framework currently in wide use will generate them for you.

Deploying detection in the absence of defect elimination and calling the projected avoided costs a reliability strategy is not a strategy. It’s filling a sieve and measuring how fast you pour.

Plug the sieve first.