Deploy PMs, Don’t Discover: Learn by Doing

Last week we walked through RCM’s origin story – how a methodology built for a handful of airlines analyzing 2,000 standardized items under FAA oversight became the default prescription for plants with 100,000+ heterogeneous equipment records maintained by a shrinking workforce following work orders that say “CHK PUMP.”

The response was predictable. There was a good amount of agreement, some comments about how valuable RCM can be, and at least one person who thinks I need to be educated.

No one asked the obvious question that I imagine many have: “If you think you’re so smart, what’s the alternative?”

Here’s the answer.

Your Equipment Is Not a Mystery

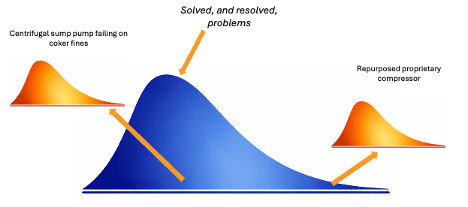

Picture your entire asset population sorted not by criticality but by how novel the failure profile is. What you get is a curve. The vast center of that curve — 80 to 90 percent of your equipment — consists of assets whose failure modes have been documented, catalogued, and resolved by the industry over the past century. Centrifugal pumps. Heat exchangers. Single-stage compressors. Gearboxes in standard service. The failure modes are in the OEM manual. They’re in API 610. They’re in every bearing manufacturer’s engineering guide. They have not changed since the last time your reliability team workshopped them.

On the far right of the curve: the genuine oddballs. The custom agitator in a fluid with uncommon rheological properties. The prototype equipment in a research and development installation. The repurposed compressor running a service no OEM datasheet anticipated. Failure modes and effects analysis is the right tool to derive equipment strategies for these tail assets – but these cases are few and far between.

There are some common equipment items in difficult – but not unique – services. The centrifugal sump pump in a coker unit that is getting fouled and torn up by fines is an example. It does not take a lot of analytical rigor – and certainly not a full FMEA – to identify mitigations for most of these problems. RCA is the tool to figure why your bad actors are failing repeatedly – but reliability engineers are too often spending 70 to 80 percent of their time workshopping the center of the curve — re-deriving what’s already known on equipment with universally documented failure modes — while the tail assets eat your lunch unattended.

FMEA is the right tool for tail assets. It is a waste of time on everything else.

Compile, Don’t Analyze

Here is something the RCM industry does not want you to think about too carefully: if you are not opening equipment up, the number of things you can and should do at a fixed interval is remarkably small.

You can pay consultants to FMEA to their hearts’ content, but in the end, the appropriate mitigations are the same ones a cynical technician or engineer would have told you to do in the first place. You monitor condition — vibration, temperature, pressure, oil analysis, thermography. You lubricate. You clean strainers. You replace filters. You verify operating parameters against design. You inspect for leaks, corrosion, and looseness. That is most of your PM program for most of your equipment, and best practice for every one of those tasks is already published, standardized, and agreed upon by the people who make the equipment and the people who write the standards.

The condition monitoring methods are known. The intervals are known. The lubrication requirements are in the OEM manual — not buried in a calculation on the exact bearing, but stated plainly: this grease, this quantity, this frequency. The filter replacement schedule is a function of differential pressure or calendar time. The strainer cleaning interval is driven by process conditions that your operators already understand – and the break-in strainer work orders confirm. None of this requires an FMEA workshop to derive. None of it is novel. None of it is site-specific in any way that changes the fundamental task.

The starting point is not analysis. The starting point is deploying the best practice that already exists — for every standard equipment class, right now, this week.

What you are deploying is not a guess. It is the accumulated knowledge of OEMs, industry standards bodies, and decades of field experience, packaged as a set of non-intrusive tasks with defined methods and intervals. You can create this in-house. You can use off-the-shelf AI to accelerate your drafting and even poll data sources and manuals. You can purchase it as a system from some providers. But, whatever you do, do not pay the provider to build it, de novo, on your time and money.

Once the best practice is deployed and running, then you refine. You don’t have to wait until you’ve run down every detail to get the vast majority of benefit from “good enough for now.” Your OEM manual tells you whether your specific configuration needs a shorter lubrication interval. Your CMMS history tells you which failure modes are actually occurring in your operating context. Your experienced technicians tell you which inspection points are inaccessible or which acceptance criteria don't match field reality. Your process conditions tell you whether the standard filter replacement interval is too conservative or not conservative enough.

These are calibration adjustments to a program that is already running and generating data — not prerequisites that must be satisfied before anything can be deployed. The five sources that the traditional approach treats as inputs to a twelve-month analysis become feedback that makes a running program better. The sequence is inverted: act first, then learn from the evidence, then adjust. Not study, then study some more, then maybe act.

The difference between "deploy best practice and refine" and "analyze comprehensively before acting" is not a difference in endpoint. It is a difference in when you start getting results — and when you start generating the execution data that actually drives improvement.

Deploy Now. Optimize From Evidence.

This is where most people hear "cut corners" or "skip the rigor." It is neither.

The best practice you deployed in the previous step is not a rough draft. It is the distillation of everything OEMs, standards bodies, and the broader industry have learned about how your equipment class degrades and what non-intrusive tasks detect or prevent it. That is not a guess. It is a starting point that most RCM workshops spend months arriving at — and that most plants never get past, because the binder never reaches the work order.

What the deployed program gives you that the workshop cannot is execution data. And execution data is a fundamentally different kind of knowledge than analytical prediction.

An RCM workshop tells you that bearing contamination is a failure mode for centrifugal pumps in hydrocarbon service. The OEM manual already said the same thing. Twelve months of structured field data tells you that Pump P-1105's drive-end bearing runs fifteen degrees hotter than the class baseline, that the oil-mist reclassifier is blocked, and that the vibration signature points to inner-race damage from a contaminated installation fourteen months ago.

The workshop gives you a list of possibilities. Execution gives you a diagnosis.

The conference room produces general knowledge about what might fail. A running program produces specific knowledge about what is failing — in your equipment, in your service, under your operating conditions, maintained by your crews. The first kind of knowledge is in published standards. The second kind can only come from doing the work. The organizations that deploy first are generating site-specific evidence that no amount of upfront analysis can produce. The organizations that analyze first are producing catalogues of failure modes that were already published. Both look like rigor. Only one produces new information.

The System Learns by Running

The document you deploy on day one is not the document you have on day 365. Every execution cycle generates structured findings data — actual measurements, condition assessments, triggered actions. That data tells you which criteria are catching real failures and which are generating noise. The reference improves by running, the same way any production system does.

This is not a vague commitment to continuous improvement. It is a two-loop architecture.

Loop One: Execution Level. Every PM closeout generates data. Bearing temperature trending from 172°F to 176°F to 182°F across three cycles? The trend is visible before the bearing fails. The reference gets updated. The next technician arrives knowing this pump’s drive-end bearing runs warm. The veteran’s knowledge doesn’t retire with him. It stays in the document.

Loop Two: Enterprise Level. Aggregate the findings data across an equipment class. If seal failures are clustering in pumps running more than fifteen percent off BEP, encode that as a strategy revision: add an operating point check to every reference in that class. One finding on one asset improves the program for fifty assets across three units.

The enterprise loop is where the analysis RCM promises actually happens — not as upfront prediction, but as evidence-based refinement. The question changes from “what might fail?” to “what is failing, and what does the pattern tell us?” That question can only be answered by execution data. It cannot be answered by workshops.

Four Reviews. No Binders.

The loops need governance or they decay into good intentions. Four defined cadences keep them turning:

Weekly: Findings triage. Did any PM finding generate a corrective work order that hasn’t been scheduled? If a technician records a finding and nothing happens, he records fewer findings next cycle. The loop dies from both ends.

Monthly: Trend review. Is any Criticality A asset showing three consecutive cycles with a parameter trending toward its threshold? Are conditional action triggers miscalibrated — too sensitive, or not sensitive enough?

Quarterly: Class review. Compare actual findings distributions against the assumptions in the class reference. If the reference assumed seal failures would show up as leakage but the data says they’re appearing first as bearing temperature elevation, the reference is wrong. Fix it. This is also where frequency decisions get revisited with evidence instead of opinion.

Annual: Strategy review. Given twelve months of site-specific execution data, is the maintenance strategy for each equipment class still appropriate? This is the only point where strategy-level decisions get made. Everything else is operational.

Notice what is absent from this structure: a workshop. A consultant. A binder. The governance lives in four defined meetings with named owners, specific inputs, and specific outputs. You already have meeting time on your calendar that produces less value than this.

But What About Rigor?

The engineers who are uncomfortable with this approach are the ones who equate rigor with upfront analysis. If you didn’t enumerate every conceivable failure mode before you started, it feels irresponsible.

I’d ask those engineers a question. If all of your rigor is spent on analysis and none of it reaches execution, where is the risk reduction?

A comprehensive FMEA that produces a strategy document that produces a work order that says “inspect mechanical seal for leakage and condition” has added zero rigor to the point of action. It has consumed months of engineering time and produced an instruction that a technician can interpret a dozen different ways. Forty-seven failure modes on paper. No acceptance criteria in the field.

Meanwhile, a compiled reference that says “measure bearing temperature with contact pyrometer; if above 180°F, generate notification for vibration analysis within seven days; do not restart until confirmed below threshold” — deployed in week one from the OEM manual — has added measurable rigor to every execution cycle from day one.

The question is not whether you are rigorous. The question is where you invest your rigor — in the analysis, or in the execution.

Start This Week

You do not need to boil the ocean. Here is the bootstrap:

Pick five assets. Your highest-criticality pumps, compressors, or heat exchangers — the ones generating the most reactive maintenance hours. Not five hundred. Five.

Pull the OEM manual and the CMMS history. If you don’t have the IOM, your distributor does. If the CMMS history is sparse, your senior mechanic is not. Use what you have.

Compile, don’t analyze. For each inspection point, write down what “acceptable” looks like: a number, a threshold, a binary pass/fail. Populate the acceptance criteria from the OEM specification and the relevant API standard. Leave asset-specific annotations blank. Let the crew fill them in from what they know.

Deploy the first version. It will not be perfect. It will not capture every failure mode. It will not reflect every nuance. But it will transform what happens at the point of execution from undirected inspection to structured, criteria-based assessment. And it will generate the data that tells you exactly how to make it better.

Run the loops. Review the findings weekly. Trend the data monthly. Revise the class reference quarterly. The first version is adequate. The second version is better. The third version, informed by two cycles of execution data, is substantively complete.

The first version takes a day. The RCM workshop takes eleven weeks. The first version generates execution data immediately. The RCM workshop generates a binder eventually.

Pick the one that produces information.

The failure modes are documented. The tolerances are published. The detection methods are known. The only thing the industry has been missing for forty years is a system that delivers this knowledge to the technician’s hands at the moment the work is being done — and then learns from what he finds.

Stop discovering what’s already known. Start deploying it. Let the system learn by running.

That is the alternative to RCM. And it starts this week.